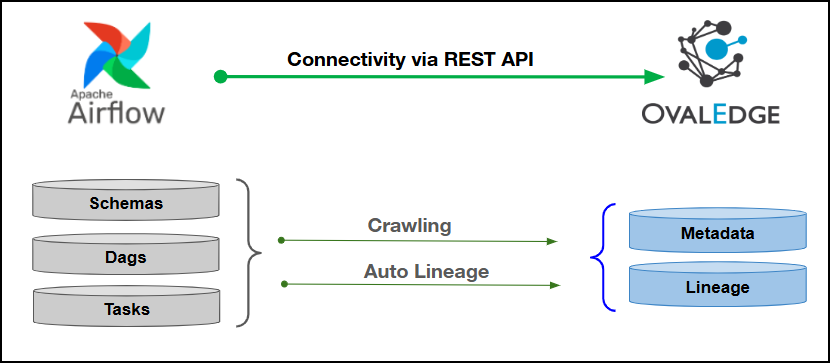

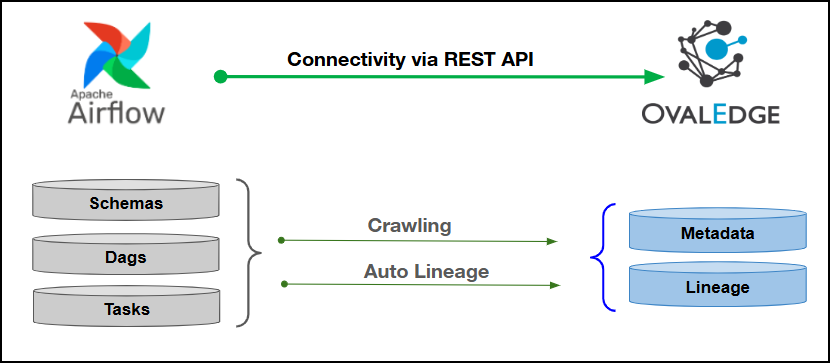

| | |
|---|---|
| Connectivity \[How the connection is established with Apache Airflow] | REST APIs |
| Verified Apache Airflow Version | Version V2 |

{% hint style="info" %}
The Apache Airflow connector is validated against the listed “Verified Apache Airflow Version” and supports other compatible versions. Submit a support ticket for any validation or metadata crawling issues.
{% endhint %}

### **Connector Features**

| Feature | Availability |
|---|---|
| Crawling | ✅ |
| Delta Crawling | NA |
| Profiling | NA |
| Query Sheet | NA |
| Data Preview | NA |
| Auto Lineage | ✅ |
| Manual Lineage | ✅ |
| Secure Authentication via Credential Manager | ✅ |
| Data Quality | NA |
| DAM (Data Access Management) | NA |
| Bridge | ✅ |

| Apache Airflow Object | Apache Airflow Attribute | OvalEdge Attribute | OvalEdge Category | OvalEdge Type |
|---|---|---|---|---|
| Dags | Dag | Code Name | Codes | Airflow_Dag |
| Tasks | Task | Code Name | Codes | Airflow_Task |
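
The mapping in the table above can be read as a small data transformation. The following is a minimal sketch only, assuming crawled metadata arrives as a dictionary of DAG IDs to task IDs; the `CatalogEntry` class and the `map_crawl` function are hypothetical names used for illustration and are not part of the OvalEdge product or its API.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class CatalogEntry:
    """Hypothetical record mirroring the columns of the mapping table."""
    code_name: str      # OvalEdge Attribute: Code Name
    category: str       # OvalEdge Category: always "Codes" in the table
    object_type: str    # OvalEdge Type: "Airflow_Dag" or "Airflow_Task"


def map_crawl(dags: Dict[str, List[str]]) -> List[CatalogEntry]:
    """Turn {dag_id: [task_id, ...]} into catalog entries.

    Each DAG becomes one Airflow_Dag entry, followed by one
    Airflow_Task entry per task, all under the "Codes" category.
    """
    entries: List[CatalogEntry] = []
    for dag_id, task_ids in dags.items():
        entries.append(CatalogEntry(dag_id, "Codes", "Airflow_Dag"))
        for task_id in task_ids:
            entries.append(CatalogEntry(task_id, "Codes", "Airflow_Task"))
    return entries
```

For example, a single DAG with two tasks would yield three entries: one of type `Airflow_Dag` and two of type `Airflow_Task`.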

Fields marked with an asterisk (*) are mandatory for establishing a connection.

| Field Name | Description |
|---|---|
| Connector Type | By default, "Airflow" is displayed as the selected connector type. |
| Credential Manager* | Select the desired credential manager from the drop-down list. Relevant parameters are displayed based on the selection. |
| License Add Ons | |
| Connector Name* | Enter a unique name for the Apache Airflow connection (Example: "Airflowdb"). |
| Connector Environment | Select the environment (Example: PROD, STG) configured for the connector. |
| Connector Description | Enter a brief description of the connector. |
| Server* | Enter the server name or IP address of the Apache Airflow server (Example: http://airflow-prod-7fxxxx.us-east-1.aws.airflowcloud.com/). |
| Local DAG path | Specifies whether to use a local file system path for storing DAG files instead of a remote or shared location. Select Yes to use a local directory for DAG storage, or No to use the default or remote DAG storage location configured in Airflow. |
| Username* | Enter the username required to access the Apache Airflow server (Example: "oesauser"). |
| Password* | Enter the password associated with the provided username. |
| Proxy Enabled* | Specifies whether the connector routes its requests through a proxy server. |
| Plugin Server | Enter the server name when running the connector as a plugin. |
| Plugin Port | Enter the port number on which the plugin is running. |
| Default Governance Roles* | Select the appropriate users or teams for each governance role from the drop-down list. All users configured in the security settings are available for selection. |
| Admin Roles* | Select one or more users from the drop-down list for Integration Admin and Security & Governance Admin. All users configured in the security settings are available for selection. |
| No Of Archive Objects* | Sets the number of recent metadata changes to a dataset at the source that are retained. Archiving is off by default. To enable it, toggle the Archive button and specify the number of objects to archive. Example: Setting it to 4 retrieves the last four changes, displayed in the 'Version' column of the 'Metadata Changes' module. |
| Select Bridge* | If applicable, select the bridge from the drop-down list. The list displays all active bridges that have been configured. These bridges facilitate communication between data sources and the system without requiring changes to firewall rules. |
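
Before saving the connection, it can help to confirm that the Server, Username, and Password values work against the Airflow REST API directly. The following is a minimal sketch, not the connector's own implementation. It assumes an Airflow 2 deployment that exposes the stable REST API under `/api/v1` and has basic authentication enabled for the API; the host `airflow.example.com` and the function names are placeholders chosen for illustration.

```python
import base64
import json
import urllib.request


def build_dags_request(server: str, username: str, password: str,
                       limit: int = 100, offset: int = 0) -> urllib.request.Request:
    """Build (but do not send) a GET request for one page of DAGs."""
    # Normalize the trailing slash so both "http://host" and "http://host/" work.
    url = f"{server.rstrip('/')}/api/v1/dags?limit={limit}&offset={offset}"
    # HTTP basic auth: base64 of "username:password".
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    request = urllib.request.Request(url, method="GET")
    request.add_header("Authorization", f"Basic {token}")
    request.add_header("Accept", "application/json")
    return request


def list_dag_ids(server: str, username: str, password: str) -> list:
    """Send the request and return the DAG IDs from the first page."""
    request = build_dags_request(server, username, password)
    with urllib.request.urlopen(request, timeout=30) as response:
        payload = json.load(response)
    # The stable API returns a JSON object with a "dags" list.
    return [dag["dag_id"] for dag in payload.get("dags", [])]
```

Calling `list_dag_ids("http://airflow.example.com/", "oesauser", "<password>")` from a machine that can reach the server should return the DAG IDs visible to that user. An HTTP 401 or 403 error suggests a credential or permission problem rather than a connector issue. If Proxy Enabled is selected, the same check would need to go through that proxy.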

| S.No. | Error Message(s) | Error Description & Resolution |
|---|---|---|
| 1 | Crawling is a mandatory step before building a lineage. | Error Description: This error occurs when a lineage build is initiated without performing the required crawling step to collect metadata. Resolution: First, run the crawling process for the dataset or source, then proceed to build the lineage. |